Why METEOR Matters for AI Book Translation

METEOR, short for Metric for Evaluation of Translation with Explicit ORdering, is a translation evaluation tool that prioritizes meaning and sentence flow over exact word matches. Unlike BLEU, which relies on strict word-for-word alignment, METEOR uses techniques like stemming, synonym matching, and paraphrasing to better assess the quality of translations. This makes it especially effective for translating books, where capturing the author’s voice, tone, and narrative flow is critical.

Key insights:

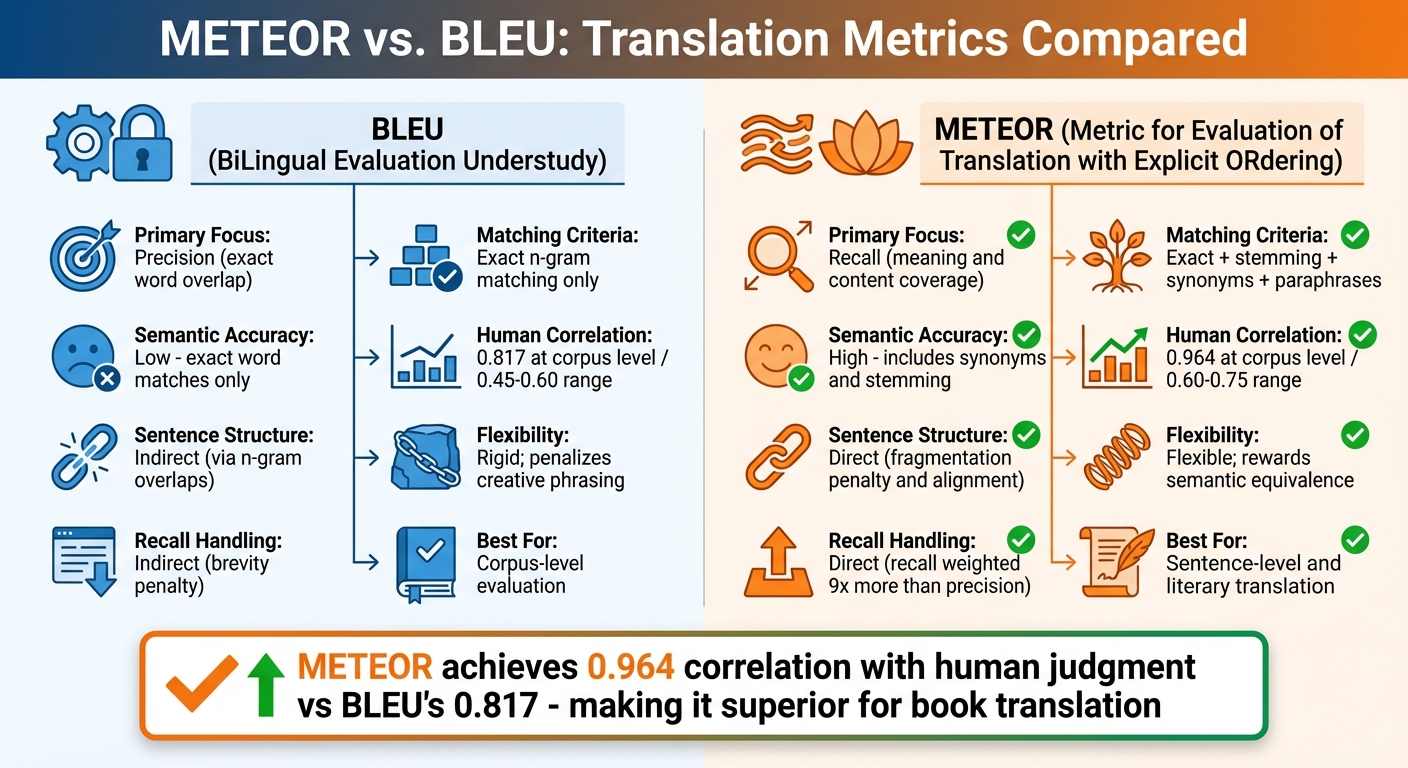

- Why BLEU falls short: BLEU's strict focus on exact word matches penalizes valid alternatives, struggles with synonyms, and fails to evaluate narrative coherence, making it unsuitable for literature.

- How METEOR works: METEOR aligns translations using exact matches, word stems, synonyms, and paraphrases. It prioritizes recall (meaning coverage) over precision and applies penalties for poor word order.

- Performance: METEOR achieves a 0.964 correlation with human judgment at the corpus level, outperforming BLEU's 0.817.

- Impact on book translations: By focusing on meaning and flow, METEOR ensures translations retain the depth and readability of the original text, making it ideal for AI-driven literary translations.

For platforms like BookTranslator.ai, METEOR enables high-quality translations across 99+ languages for as low as $5.99 per 100,000 words, making literature accessible to a global audience.

Problems with Evaluating AI Book Translations

Why BLEU Fails for Long-Form Translations

BLEU (Bilingual Evaluation Understudy), a metric introduced in 2002, relies on strict n-gram matching, which often fails to capture the subtleties of literary translation.

The crux of the issue lies in BLEU's approach: it evaluates quality by matching 1- to 4-word sequences exactly as they appear in a human reference. This rigid method struggles with the creative flexibility required for translating literature. As the NLLB team explains:

"BLEU penalizes valid alternative translations. If the reference says 'the car is red' and the system produces 'the automobile is red,' BLEU penalizes the mismatch even though the meaning is identical" [4].

This inability to recognize synonyms is particularly problematic for books, where word choice often carries significant weight. For example, BLEU treats "big" and "large" as completely different words, even though they mean the same thing. Similarly, it doesn't account for variations like "running", "runs", and "ran", often penalizing translations that are both accurate and creative.

Another core limitation is BLEU's corpus-level design. It was originally developed to handle large datasets, not the sentence-level precision critical for literature. BLEU also lacks the ability to evaluate sentence flow or narrative coherence. As NLLB notes:

"BLEU does not account for fluency or meaning preservation directly - it is purely an n-gram overlap measure" [4].

This means a translation could technically include all the correct words but arrange them in a jumbled, awkward order - and still score well. These shortcomings highlight the need for evaluation methods that prioritize context, coherence, and the overall narrative experience.

Why Context and Meaning Matter in Books

Books are more than just collections of sentences - they're intricate narratives where every word, sentence structure, and stylistic choice plays a role in shaping the reader's experience. BLEU's narrow focus on exact word matches misses this bigger picture, especially when it comes to maintaining narrative flow and coherence.

The semantic understanding gap is particularly glaring. Michael Brenndoerfer points out:

"Two semantically equivalent translations could receive very different BLEU scores depending on their specific word choices" [5].

This creates a problematic incentive for AI systems to chase exact word matches instead of striving for semantic accuracy or natural fluency.

Literary translation demands a balance between precision and recall - not only avoiding errors but also preserving the depth, tone, and emotional resonance of the original text. BLEU heavily emphasizes precision, but books require metrics that measure whether the translation captures the author's intent and narrative flow. Tools like METEOR, which prioritize meaning and flow by weighting recall nine times higher than precision, offer a more fitting approach for evaluating literary translations [1].

sbb-itb-0c0385d

METEOR : A metric for Machine Translation

What is METEOR and How Does It Work?

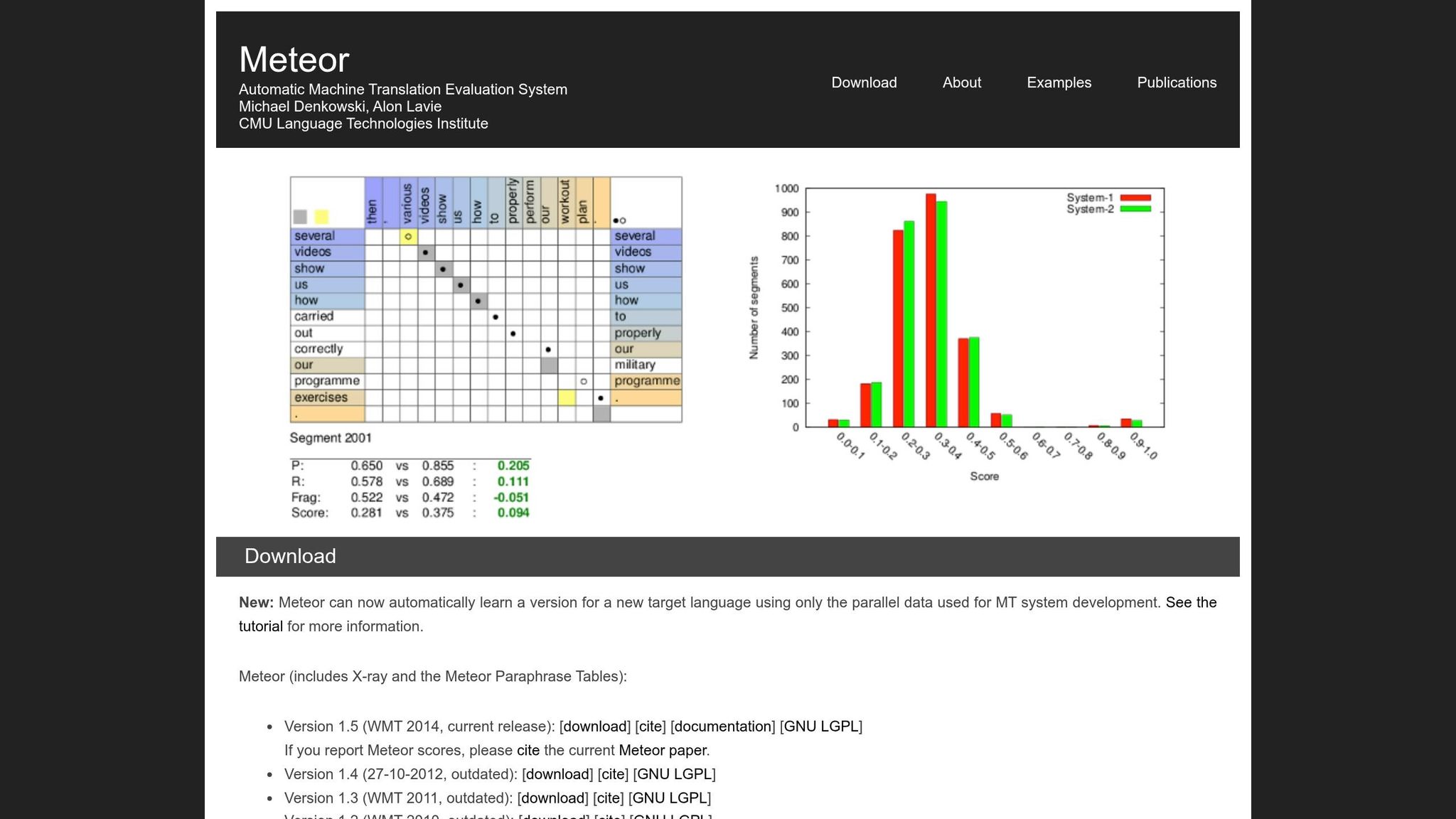

METEOR, short for Metric for Evaluation of Translation with Explicit ORdering, was introduced in 2005 by researchers Satanjeev Banerjee and Alon Lavie at Carnegie Mellon University. It was developed to address some of the limitations of BLEU, particularly its rigid word-for-word matching. METEOR focuses on preserving meaning and natural word order, which makes it especially useful for evaluating translations that need to maintain narrative flow - like book translations.

The metric works by aligning individual words in the candidate translation with those in the reference translation. When there are multiple ways to align the words, METEOR chooses the one with the least number of "crosses" (intersections between mapping lines). This approach helps maintain a more natural word order in the evaluation process [1].

Core Features of METEOR

METEOR stands out because of its tiered matching approach, which goes beyond exact word matching. It uses four sequential modules to evaluate translations:

- Exact matching: Matches identical word forms.

- Stemming: Matches words that share the same root, like "running" and "runs."

- Synonymy: Recognizes words with similar meanings using WordNet.

- Paraphrase matching: Matches phrases with similar semantic content.

This layered approach addresses BLEU's struggle to account for valid word variations and alternative expressions [1][2][6].

METEOR's scoring system combines two key elements. First, it calculates a weighted F-mean of precision and recall, with recall being weighted nine times more heavily than precision. This reflects how humans tend to evaluate translation quality, prioritizing coverage of the original meaning over exact matches [1]. Second, it applies a fragmentation penalty to discourage translations where matched words are scattered or out of order. If the matched words are broken into too many "chunks", the score can be penalized by up to 50%. This ensures that translations with correct words but poor structure - often referred to as "word salad" - receive lower scores [1].

How METEOR Aligns with Human Judgment

Studies show that METEOR correlates with human judgment better than BLEU, achieving correlation coefficients between 0.60 and 0.75, compared to BLEU's range of 0.45 to 0.60 [6].

This stronger alignment is largely due to METEOR's sentence-level focus. While BLEU is designed to assess translations at the corpus level, METEOR evaluates individual sentences or segments. This makes it particularly effective for assessing the flow and coherence needed in book translations [1]. Additionally, METEOR can process up to 500 segments per second per CPU core, making it both efficient and reliable for practical use [2]. Its ability to closely match human judgment has solidified its role in improving AI-driven book translations.

METEOR vs. BLEU: Why METEOR Works Better for AI Book Translation

METEOR vs BLEU Translation Metrics Comparison

Key Advantages of METEOR for Book Translation

When it comes to translating literary works, METEOR stands out as a more effective evaluation metric than BLEU. Its unique alignment methods and focus on meaning make it especially suited for the nuances of book translation.

One of the main differences is how each metric handles semantic accuracy. BLEU relies on exact word matches, which can unfairly penalize translations that use synonyms or alternate word forms - even when the meaning remains intact. METEOR, on the other hand, incorporates stemming and synonym matching. For example, it recognizes that words like "good" and "well" or "runs" and "running" share the same semantic value. This flexibility is essential for literary translations, where diverse vocabulary and creative phrasing are often necessary to preserve the author's style and intent.

Another important distinction is METEOR's emphasis on recall over precision. BLEU prioritizes precision by measuring how many words in the AI-generated translation match those in the reference text. METEOR, however, balances precision and recall, with recall weighted nine times more heavily [1]. This ensures that the translation captures the full meaning of the original text - a critical factor for accurately conveying complex narratives.

METEOR also excels in sentence-level evaluation. While BLEU is tailored for evaluating translations at the corpus level, METEOR is designed to align closely with human judgment on individual sentences or segments. It achieves a maximum correlation of about 0.403 at the sentence level [1]. This makes it particularly effective for assessing the flow and coherence of specific passages, which is key in book translation.

One of METEOR's standout features is its fragmentation penalty, which addresses word order and sentence structure. If matched words in the translation are scattered into too many chunks, the score can drop by as much as 50% [1]. This mechanism ensures that translations maintain a natural and coherent structure - something BLEU often overlooks. By focusing on these details, METEOR helps preserve the original text's nuanced meaning and readability.

Comparison Table: METEOR vs. BLEU

| Feature | BLEU | METEOR |

|---|---|---|

| Primary Focus | Precision (exact word overlap) | Recall (meaning and content coverage) |

| Matching Criteria | Exact n-gram matching | Exact, stemming, synonyms, and paraphrases |

| Semantic Accuracy | Low (exact word matches only) | High (includes synonyms and stemming) |

| Human Correlation | Stronger at corpus level | Strong at both sentence and corpus levels |

| Sentence Structure | Indirect (via n-gram overlaps) | Direct (via fragmentation penalty and alignment) |

| Flexibility | Rigid; penalizes creative phrasing | Flexible; rewards semantic equivalence |

| Recall Handling | Indirect (brevity penalty) | Direct (recall calculation weighted 9x more) |

How METEOR is Used in AI Book Translation Platforms

Ensuring Quality with METEOR

AI-powered translation platforms leverage METEOR to maintain semantic accuracy and uphold the delicate nuances of literary works. The process kicks off with alignment mapping, where the system identifies connections between the AI-generated translation and a reference text. This involves recognizing exact matches, word stems, synonyms, and even paraphrases [2]. Such detailed mapping ensures the translation reflects the original meaning, even if the phrasing differs.

To handle the complexities of different languages, METEOR is configured with language-specific tools like stemmers and paraphrase tables. For example, platforms like BookTranslator.ai, which supports over 99 languages, use these resources to address the unique linguistic structures of diverse languages. Whether it's Romance languages like Spanish and French or more intricate ones like Arabic and Czech, these tools are vital for capturing morphological variations [2].

What sets METEOR apart is its ability to fine-tune parameters. Platforms can calibrate these settings to align with specific evaluation tasks, such as measuring adequacy or maintaining a consistent style. This feature is particularly valuable in literary translations, where preserving the author’s voice and the rhythm of the narrative is essential. Additionally, the system’s fragmentation penalty ensures that sentences flow naturally, avoiding the awkward, disjointed feel of a mere string of correct words. This attention to sentence fluidity is critical for keeping readers absorbed in the story over hundreds of pages.

Beyond improving the quality of translations, METEOR also plays a pivotal role in making literature more accessible to a global audience.

Improving Multilingual Access to Literature

By safeguarding the meaning and depth of the original text, METEOR not only improves translation quality but also helps bring literature to readers in their native languages. Using parallel data, METEOR enables platforms to expand their language offerings without sacrificing quality [2]. This ability to adapt is especially important for readers in underrepresented language markets.

The human-focused evaluation approach ensures that translations feel natural and engaging. For example, platforms like BookTranslator.ai provide translations starting at $5.99 per 100,000 words, making high-quality translations affordable while retaining the story’s narrative charm and cultural subtleties. By prioritizing recall over precision, METEOR captures the richness of the source text, including intricate character arcs and thematic layers that are essential to compelling storytelling.

Conclusion

METEOR is changing the game in AI book translation evaluation by prioritizing semantic accuracy and natural readability. Unlike traditional metrics, METEOR accounts for synonyms, word stems, and paraphrases, achieving an impressive 0.964 correlation with human judgment at the corpus level - significantly higher than BLEU's 0.817 [1]. This ensures that translations retain the author's style, narrative consistency, and subtle cultural elements.

What sets METEOR apart is its recall-weighted scoring combined with a fragmentation penalty, which ensures translations not only capture the full meaning of the original text but also read smoothly. This is especially critical for long-form content, where maintaining coherence and flow across an extensive narrative is essential.

For platforms like BookTranslator.ai, supporting over 99 languages, METEOR's ability to recognize linguistic variations allows for high-quality translations at competitive rates - starting at just $5.99 per 100,000 words. By leveraging parallel data to learn new target languages [2], METEOR opens the door for readers in underserved regions to access literature in their native tongues.

"METEOR functions more like modern voice recognition systems that understand different ways of saying the same thing. It evaluates translations with flexibility, mirroring human judgment." - Iterate.ai [3]

FAQs

Is METEOR enough to judge a book translation’s quality?

METEOR is a useful tool for measuring translation quality, especially when it comes to identifying semantic nuances and linguistic details. However, relying on it alone isn't enough to fully evaluate the quality of a book translation. Pairing METEOR with human evaluations offers a more balanced and thorough way to assess translation quality.

How does METEOR handle idioms and creative phrasing?

METEOR tackles the challenges of idioms and creative phrasing through synonym matching, stemming, and adaptable linguistic evaluation. These tools allow it to grasp subtle, non-literal expressions, ensuring translations preserve both the intended meaning and the original style.

Can METEOR catch consistency issues across an entire novel?

METEOR is capable of spotting consistency issues in a novel by examining semantic similarities and linguistic details throughout the text. This helps ensure that the translation preserves a consistent meaning, tone, and style across the entire book.